Why Data Center Cooling Is the New AI Bottleneck

AI models are exploding in size and compute demand. Every extra FLOP generates heat, and overheating can throttle performance or even cause hardware failures. Traditional cooling systems, designed for predictable workloads, struggle to keep up with the unpredictable spikes of modern AI training clusters.

Startup DNA Meets Cisco‑Scale

Imagine a company that thinks like a scrappy startup (fast, innovative, and customer‑obsessed) while having the manufacturing depth and global support of Cisco. That blend creates a powerful platform for re‑imagining data‑center cooling.

Key Characteristics of the Hybrid Approach

- Rapid Prototyping: Small, cross‑functional teams develop modular cooling units in weeks, not months.

- Mass‑Production Reliability: Leveraging Cisco‑grade supply chains ensures each module meets rigorous quality standards.

- Open Architecture: APIs and standards‑based interfaces allow seamless integration with existing DC management software.

Core Technologies Driving the Transformation

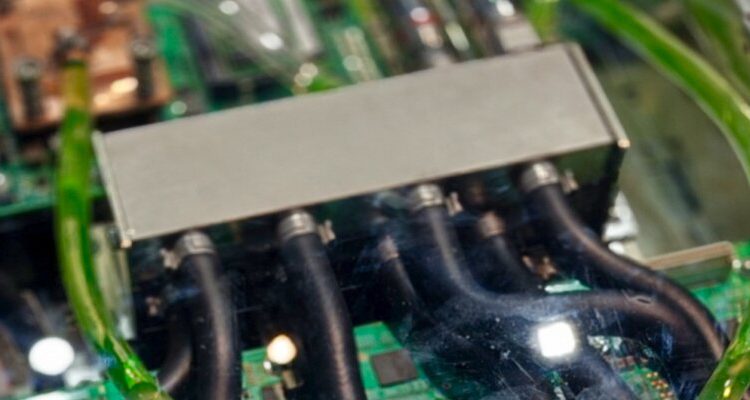

1. Direct‑to‑Chip Liquid Cooling

Coolant flows directly over the processor package, removing heat at the source. Benefits include:

- Up to a 40% reduction in power usage effectiveness (PUE), the ratio of total facility energy to IT equipment energy.

- Higher sustained GPU clock speeds for AI training.

- Lower acoustic noise than traditional fan‑based air cooling.
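
To give a feel for the numbers behind direct‑to‑chip cooling, here is a minimal sketch of the steady‑state energy balance Q = m_dot * c_p * dT, which relates heat load, coolant flow, and coolant temperature rise. It assumes plain water as the coolant and uses approximate property values; the function name and the 700 W example load are illustrative assumptions, not figures from any vendor.

```python
# Illustrative only: steady-state energy balance Q = m_dot * c_p * dT.
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water
WATER_DENSITY_KG_PER_L = 1.0             # approximate, near room temperature


def required_flow_l_per_min(heat_load_w: float, delta_t_k: float) -> float:
    """Return the water flow (litres per minute) needed to carry away
    heat_load_w watts with a coolant temperature rise of delta_t_k kelvin."""
    if heat_load_w < 0:
        raise ValueError("heat_load_w must be non-negative")
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_per_s = heat_load_w / (WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    # Hypothetical example: a 700 W accelerator with a 10 K coolant rise.
    print(f"{required_flow_l_per_min(700.0, 10.0):.2f} L/min")
```

Under these assumptions a single 700 W device needs roughly one litre of water per minute. Real loops use treated water or glycol mixes with different properties, so treat the result as an order‑of‑magnitude estimate.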

2. AI‑Powered Thermal Management

Machine‑learning models predict hotspot formation minutes before it happens, adjusting coolant flow rates and fan speeds in real time. This predictive control:

- Prevents thermal throttling.

- Optimizes energy consumption.

- Extends hardware lifespan.
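
The predictive loop described above can be sketched in a few lines. This is a simplified stand‑in, not the product's actual model: it swaps the machine‑learning predictor for a least‑squares linear trend over recent temperature samples, then applies a proportional response to the forecast. The function names, the 70 °C target, and the gain values are all hypothetical.

```python
from typing import Sequence


def forecast_temp_c(samples_c: Sequence[float], interval_s: float,
                    horizon_s: float) -> float:
    """Extrapolate the least-squares linear trend of evenly spaced
    temperature samples horizon_s seconds past the last sample."""
    n = len(samples_c)
    if n < 2:
        raise ValueError("need at least two samples")
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    mean_x = (n - 1) / 2.0
    mean_y = sum(samples_c) / n
    cov = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(samples_c))
    var = sum((i - mean_x) ** 2 for i in range(n))
    slope_per_sample = cov / var
    # Fitted value at the most recent sample, then extended along the trend.
    fitted_last = mean_y + slope_per_sample * ((n - 1) - mean_x)
    return fitted_last + slope_per_sample * (horizon_s / interval_s)


def flow_setpoint_pct(predicted_c: float, target_c: float = 70.0,
                      base_pct: float = 40.0,
                      gain_pct_per_c: float = 5.0) -> float:
    """Raise coolant flow in proportion to the forecast overshoot,
    clamped to a maximum of 100% of pump capacity."""
    excess_c = max(0.0, predicted_c - target_c)
    return min(100.0, base_pct + gain_pct_per_c * excess_c)


if __name__ == "__main__":
    # Hypothetical readings taken every 10 s; look 30 s ahead.
    predicted = forecast_temp_c([60.0, 62.0, 64.0, 66.0], 10.0, 30.0)
    print(predicted, flow_setpoint_pct(predicted))
```

The point of acting on the forecast rather than the current reading is that flow rises before the chip reaches its throttle point. A production controller would likely use a richer model and add rate limits and sensor‑fault handling.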

3. Modular Heat‑Exchange Pods

Instead of a monolithic chilled‑water system, the solution uses plug‑and‑play pods that can be added or removed as compute density changes. The pods are:

- Pre‑tested for leak‑free operation.

- Stackable for vertical rack deployments.

- Compatible with both warm‑water and chilled‑water loops.
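
Because capacity is added pod by pod, sizing reduces to simple arithmetic. The sketch below is a rough illustration; the per‑pod capacity and the spare‑pod allowance are hypothetical parameters, since the article does not state real pod ratings.

```python
import math


def pods_needed(rack_heat_kw: float, pod_capacity_kw: float,
                spare_pods: int = 0) -> int:
    """Return the number of pods required to absorb rack_heat_kw,
    plus an optional number of spare pods for redundancy."""
    if rack_heat_kw < 0:
        raise ValueError("rack_heat_kw must be non-negative")
    if pod_capacity_kw <= 0:
        raise ValueError("pod_capacity_kw must be positive")
    if spare_pods < 0:
        raise ValueError("spare_pods must be non-negative")
    return math.ceil(rack_heat_kw / pod_capacity_kw) + spare_pods


if __name__ == "__main__":
    # Hypothetical: a 120 kW rack, 50 kW pods, one spare pod.
    print(pods_needed(120.0, 50.0, spare_pods=1))
```

Re‑running the calculation as rack density changes shows when a pod should be added or can be removed.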

Implementation Roadmap for Data Center Operators

- Assess Current PUE: Measure baseline efficiency to identify cooling‑related waste.

- Pilot a Single Rack: Deploy a modular liquid‑cooling pod on a high‑density AI rack to evaluate performance.

- Integrate AI Controls: Connect sensors to a cloud‑based analytics platform for predictive thermal management.

- Scale Across the Facility: Roll out pods rack‑by‑rack, leveraging Cisco‑grade logistics for fast delivery and installation.
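
For the first step of the roadmap, the baseline can be computed directly from metered energy. The sketch below uses the standard PUE definition (total facility energy divided by IT equipment energy); the meter readings in the example are made up for illustration.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("it_equipment_kwh must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy spent on non-IT overhead,
    such as cooling and power distribution."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue_value


if __name__ == "__main__":
    # Hypothetical monthly meter readings.
    baseline = pue(total_facility_kwh=1500.0, it_equipment_kwh=1000.0)
    print(f"PUE {baseline:.2f}, overhead share {overhead_share(baseline):.0%}")
```

A PUE of 1.0 would mean every kilowatt‑hour reaches IT equipment, so the gap above 1.0 is the overhead a cooling pilot should aim to shrink. Measuring the same two quantities before and after the single‑rack pilot gives a like‑for‑like comparison.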

Business Impact

Operators report up to 30% lower total cost of ownership (TCO) for AI workloads, while sustainability teams celebrate a 20% drop in carbon emissions due to reduced energy demand. The hybrid startup‑plus‑Cisco‑scale model also shortens time‑to‑market for new cooling innovations, keeping data centers competitive in the fast‑moving AI era.

Future Outlook

As AI models become even larger, cooling will evolve from a supporting function to a strategic differentiator. Expect tighter integration of cooling hardware with AI orchestration platforms, edge‑ready liquid‑cooling modules, and even smarter coolant fluids that change viscosity on demand.

Conclusion

By marrying the agility of a startup with the production muscle of Cisco, the next generation of data‑center cooling delivers the efficiency, reliability, and performance AI workloads demand. Data center leaders who adopt this hybrid approach will not only cut costs and carbon footprints but also unlock the full potential of AI innovation.
